By the time we had three AI agents in production, the shape of the work had surprised us. We had expected most of our hours to go into the agents themselves. Instead, most of them went into preparing the rest of the company so the agents could do their jobs at all.

To make an agent useful in marketing, marketing’s data has to be readable by something other than a marketer. The same applies to finance, sales, customer success, and product. Becoming AI-native is a cross-functional operating change, not a technology project. It means making the data each team owns available to AI agents in a form the agents can use, which is rarely the form the team originally built for human readers.

It means changing processes too. The senior people who used to silently reconcile contradictions between systems have to write those rules down so an agent can apply them. Security and permissions, which most SaaS companies treat as a one-time setup item, become an ongoing review, because every new agent is a new identity in the stack and every identity needs scoped access decided before it touches anything live.

All of this is real work upfront, and most of it does not look like AI work. We have spent more time on it than on the agents themselves. It pays back later, because every artifact built for one agent makes the next one cheaper, and over the past eight months that compounding has been the difference between five agents in production and one impressive demo.

The hidden job nobody talks about

In every company there is a category of work I would describe as keeping the company coherent. Reconciling what the CRM says with what the billing system says. Knowing that “active customer” means one thing on Tuesday’s revenue call and a different thing on Friday’s renewal review. Remembering which Slack channel has the up-to-date list of integrations and which one is the stale one from two reorgs ago.

This work has always existed. It’s invisible because we don’t bill for it, don’t title it, don’t put it on the org chart. It mostly happens in the heads of senior people who have been around long enough to know which sources lie and which are reliable. They are the human cache of how the company actually operates, and they get paid for other things.

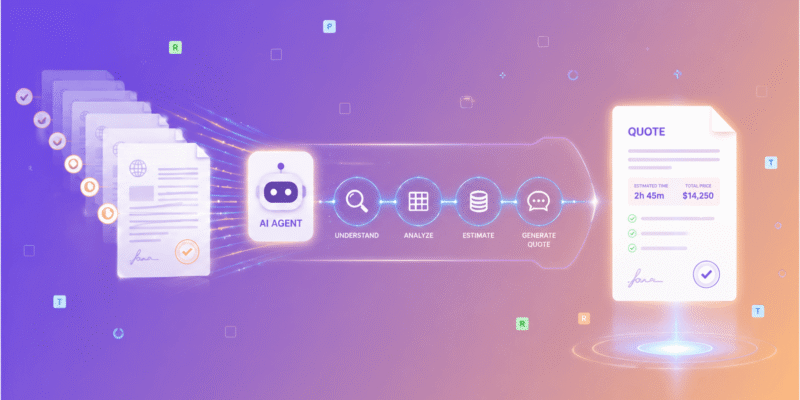

When you put an AI agent into a function, the first thing the agent does is hit that cache and find it isn’t a system. There is nothing to query. There is no document. The agent does the most reasonable thing it can, which is to take the systems at face value, and the answers come out subtly wrong in a way that’s hard to debug because each input system looked correct on its own.

This is the thing nobody briefs you on before you start. The reason your AI agent is producing odd outputs is almost never the model. It’s that you’ve asked the model to do work that, for years, has been done by an unnamed person walking between systems and reconciling them by hand.

What “AI-native” actually demands

The phrase “AI-native company” is doing a lot of work in our industry right now, and most of that work is misplaced. People hear it and think it means tooling: better models, better orchestration frameworks, agent platforms, vector databases. Those are real and they help. They are also not what makes a company AI-native.

What makes a company AI-native is that the company has written itself down. Not in a marketing sense. In an operational sense. Somebody in the company has decided, and put in writing, what an account is, what a deal is, what counts as a customer, which system is authoritative when two of them disagree, and who has the authority to revise that decision. The work is unglamorous and it isn’t really an engineering problem. Engineering can build the tables. Engineering cannot decide what an account is. That decision belongs to whoever runs that part of the business.
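For readers who build these systems, here is a minimal Python sketch of what “written down” can look like once the business has made its calls. It assumes a hypothetical registry in which each field maps to exactly one authoritative system and one business owner who may revise the decision; the field names, system names, owner titles, and the `resolve` helper are all illustrative and do not describe Birdview’s actual stack. The engineering part is deliberately trivial. The entries are the decisions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceOfTruth:
    """One written-down decision: which system wins for a field, and who owns the rule."""
    field: str
    authoritative_system: str
    owner: str  # hypothetical: the business owner allowed to revise this decision


# Hypothetical registry; system names and owner titles are illustrative only.
REGISTRY = {
    "arr": SourceOfTruth("arr", "sql_warehouse", "CFO"),
    "customer_name": SourceOfTruth("customer_name", "billing", "Head of Finance"),
}


def resolve(field: str, values_by_system: dict[str, object]) -> object:
    """Return the value reported by the authoritative system, ignoring the others.

    Raises KeyError if nobody has decided which system owns the field yet,
    and LookupError if the authoritative system has no value for it.
    """
    decision = REGISTRY[field]  # KeyError: this field has no recorded decision
    if decision.authoritative_system not in values_by_system:
        raise LookupError(f"{decision.authoritative_system} has no value for {field!r}")
    return values_by_system[decision.authoritative_system]
```

With this registry, `resolve("arr", {"crm": 1_200_000, "sql_warehouse": 1_150_000})` returns the warehouse figure even though the CRM disagrees. Failing loudly on an undecided field, such as “active customer”, is the point of the design: the gap reaches a person who can make the call instead of an agent quietly taking one system at face value.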

I see the same surprise every time I describe this to other founders. They expect the AI work to be technical. The technical part of every agent we’ve built has been the easy part. The hard part has been getting four people in a room to agree on which system is the source of truth for ARR, to write the answer down, and to commit to maintaining it when the source changes. That’s not a model problem. It’s a company problem.

The cost is mostly political

If the work were technical, it would be easier. There would be a tool you could buy, a framework you could adopt, a vendor who would do it for you. There isn’t. The work is making explicit a set of decisions that the company has been comfortably avoiding for most of its life, because human operators didn’t need them to be explicit.

Some of those decisions are uncomfortable. When you write down that the SQL warehouse is authoritative for ARR and the CRM is not, the team that maintains the CRM loses something. When you decide that the canonical customer name comes from billing and not from the salesperson who created the account, the salesperson’s autonomy gets a little smaller. None of these are dramatic, but they add up, and somebody has to be senior enough to make the calls and stick with them.

The companies I’ve watched try to skip this step end up in a familiar place. They have impressive demos, real prototypes, sometimes even one agent in production that works for a single team. They cannot scale beyond it because every new agent rediscovers the same disagreements, and every new function head wants to relitigate decisions the previous one made. The company stays illegible. The agents stay shallow. AI shows up as a layer of interesting tools rather than as a way the company runs.

The companies I’ve watched do this well usually have one thing in common, which is that somebody senior has made readability a priority and protected the time to make the decisions. That somebody is almost always the COO or the CEO. It cannot be the CTO alone because most of the decisions aren’t technical. It cannot be a head of data alone because the decisions are about what the business is, not about how to model it.

A different kind of operating discipline

The interesting thing about doing this work is that it doesn’t just make AI agents better. It makes the company better.

When the head of CS and the head of finance sit down to agree on what an active customer is, they discover that they had different definitions, and the difference was costing them on three different boardroom slides. When the head of marketing writes down which campaigns are real and which are dead, the team running them gets clearer goals. When somebody finally writes down which Slack channel has the truth and which one is stale, the new hires onboard faster.

I would go further. I think the work of making a company readable for AI is the closest thing to genuine operational discipline that most software companies have ever attempted. That is not because the idea is new. COOs at Fortune 100 companies have been doing versions of this for decades. They call it master data management or data governance, and they’ve been telling SaaS founders to do it for twenty years. We’ve ignored them, because at our scale you can grow without it. AI is the first thing in the software business that has materially changed that calculation. You cannot grow an agent-driven company on top of a company that hasn’t decided what its own facts are.

What I think happens next

I think over the next few years the companies that succeed at being AI-native will look different from the outside than people expect. They won’t necessarily have the most agents. They won’t have the flashiest demos. They will have unusually clear answers when somebody from the outside asks a basic question about what the company is and how it operates. The clarity will not be PR polish. It will be the residue of having done the work of writing themselves down, because they had to, because their agents demanded it.

I also think the people who turn out to matter most in this transition will not be the engineers building the agents. The engineers are doing real work and the work is not the constraint. The constraint is the small set of people in each function who can decide what the function actually is, and who are willing to write the answer down and defend it. Those people exist in every company, and right now most companies are not asking them to do this kind of work. They will be.

For us, the lesson eight months in is that we underestimated this part by a wide margin. We thought the agents were the hard work. The agents were the easy work. Making the company underneath the agents legible enough to support them turned out to be the actual project, and the part nobody warned us about, and the part that has paid back more than any single agent we’ve shipped.

What’s next

This is the last post in the founding eight-week run of this series. We started with the announcement and walked through five functions where we automated something real: sales post-call workflow, sales coaching, customer success, marketing, and presales. Underneath all of them is the operating layer this post is about. It is the part nobody wants to talk about and the part that makes the rest possible.

Stay updated on Birdview's AI Automation Journey

This is Part 8 of “Becoming AI-Native,” a weekly series from the Birdview PSA team on restructuring a company around AI workflows. Follow along on LinkedIn.